Projects & Initiatives

Corona Data Scraper

It happened suddenly, and yet it was expected. In March, following a rapid increase in the number of cases of COVID-19 in British Columbia, my university announced they would be suspending in-person classes until further notice. The day the announcement was made was a surreal one. I was faced with the reality that things would not return to normal for a long time and that we would have to stay confined for the next few months at least.

Stuck at home, I tried to find a way to use my skills to help with the situation. At the time, the only significant source of COVID-19 statistics was the Johns Hopkins COVID-19 dashboard. That project was having difficulty keeping up with the global rise in cases. While browsing its GitHub repository, I stumbled on a new project attempting to address the flaws in the Johns Hopkins dataset, called coronadatascraper.

coronadatascraper was created by Larry Davis, an engineer at Adobe. The goal of this project was to provide an alternative to the Johns Hopkins dataset by scraping official government sources from around the world.

With the correct infrastructure, the scrapers could run each day, allowing for a regularly updated dataset tracking the COVID-19 pandemic. As the code for each scraper was open source, one could quickly verify the origin of the COVID-19 data for a particular region.

I joined the project as its second GitHub maintainer. At its peak, the project was maintained by a team of around 7 to 9 active maintainers, and a total of 57 contributors submitted pull requests over its lifetime. The project also caught the attention of Amazon, which supported it by awarding $15,000 in AWS credits.

Covid Atlas was the successor to coronadatascraper. It took advantage of AWS to run the scrapers and improved access to the COVID-19 dataset through a new landing page. Unfortunately, the project saw less participation as the initial enthusiasm for contributing to COVID-19 related projects waned.

Architecture

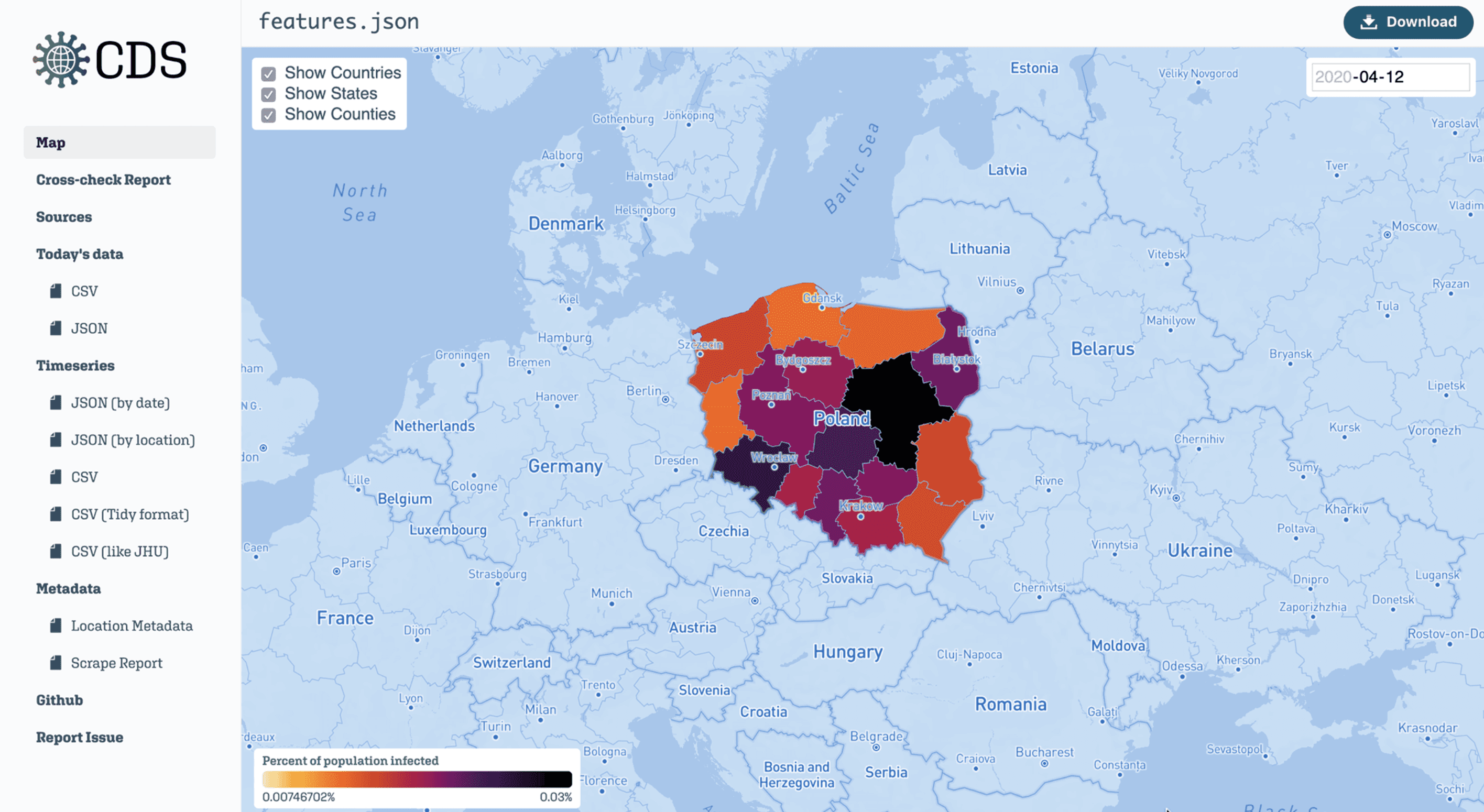

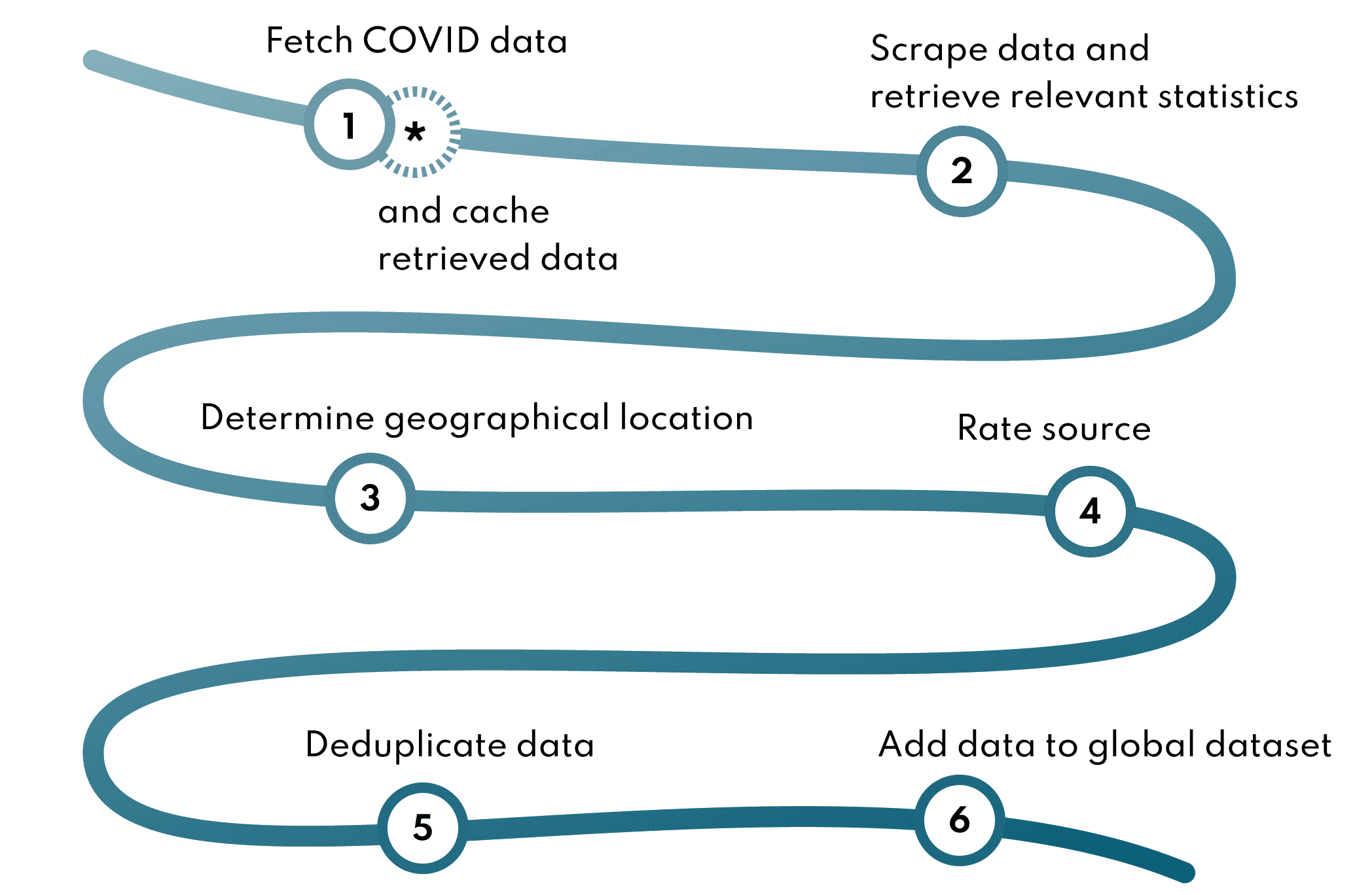

coronadatascraper generated a daily snapshot of COVID-19 cases from around the world by scraping official government websites. Every day, a script would run a series of web scrapers and compile the COVID-19 data produced into a time series CSV organized by geographic locations.
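As a rough illustration of the compile step, here is a minimal Python sketch that turns per-day results into a time series CSV. The layout (one row per location, one column per date), the function name `to_timeseries_csv`, and the sample locations are assumptions made for this example, not the project's actual code or schema.

```python
import csv
import io


def to_timeseries_csv(snapshots: dict) -> str:
    """Convert {date: {location: cases}} into CSV text.

    Assumed layout: one row per location, one column per date.
    """
    dates = sorted(snapshots)
    locations = sorted({loc for day in snapshots.values() for loc in day})
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["location", *dates])
    for loc in locations:
        # A location with no data on a given day gets an empty cell.
        writer.writerow([loc] + [snapshots[d].get(loc, "") for d in dates])
    return buffer.getvalue()


# Hypothetical sample data for two days.
snapshots = {
    "2020-03-01": {"Alpha": 3},
    "2020-03-02": {"Alpha": 5, "Beta": 1},
}
timeseries = to_timeseries_csv(snapshots)
```

ISO-formatted date strings sort chronologically, so a plain `sorted` call is enough to order the columns.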

The community contributed scrapers through pull requests to the project. These scrapers made use of a shared API and structure to simplify their development.

Data sources

We relied on government websites to track the case count for a particular region. The project also tracked hospitalized, discharged, and recovered cases, fatalities, and total tests administered.

We prioritized tracking statistics at the local level.

Government sources came in 5 different data types:

- HTML pages

- HTML pages requiring JavaScript to work

- Tableau or ArcGIS dashboards

- CSV or JSON files

- Images or PDF files (the absolute worst kind 🤮)

Here is our strategy for supporting each data type, in one line each:

- HTML pages: Cheerio

- HTML pages requiring JavaScript to work: Puppeteer driving a headless Chrome browser to run the JavaScript, then Cheerio once the page is fully loaded

- Tableau: Exporting the page as PDF and parsing the file, or using a hidden API (when found)

- ArcGIS: Using the ArcGIS API

- CSV or JSON files: processing the file as a JSON array

- PDF: parsing the file using a custom-built library relying on pdf.js

- Images: reaching out to the government agency to request that they change their data reporting practices.
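To make the "CSV or JSON files" strategy concrete, here is a minimal sketch of normalizing both formats into the same list of records. The project itself was built on JavaScript tooling, so this Python version is only an illustration; `load_records` and the sample payloads are hypothetical.

```python
import csv
import io
import json


def load_records(text: str, fmt: str) -> list:
    """Turn a fetched CSV or JSON payload into a list of row dictionaries."""
    if fmt == "csv":
        # DictReader uses the header row as the keys of every following row.
        return [dict(row) for row in csv.DictReader(io.StringIO(text))]
    if fmt == "json":
        data = json.loads(text)
        # Accept either a bare array or a single object.
        return data if isinstance(data, list) else [data]
    raise ValueError(f"unsupported format: {fmt}")


# Hypothetical payloads standing in for a downloaded file.
csv_rows = load_records("county,cases\nAlpha,10\nBeta,4\n", "csv")
json_rows = load_records('[{"county": "Alpha", "cases": 10}]', "json")
```

Note that CSV values arrive as strings (`"10"`), while JSON keeps its numeric types, so a scraper would still need to convert counts before using them.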

Caching

Some scrapers introduced miscounts, meaning the generated COVID-19 data did not correctly reflect the data provided by the government source.

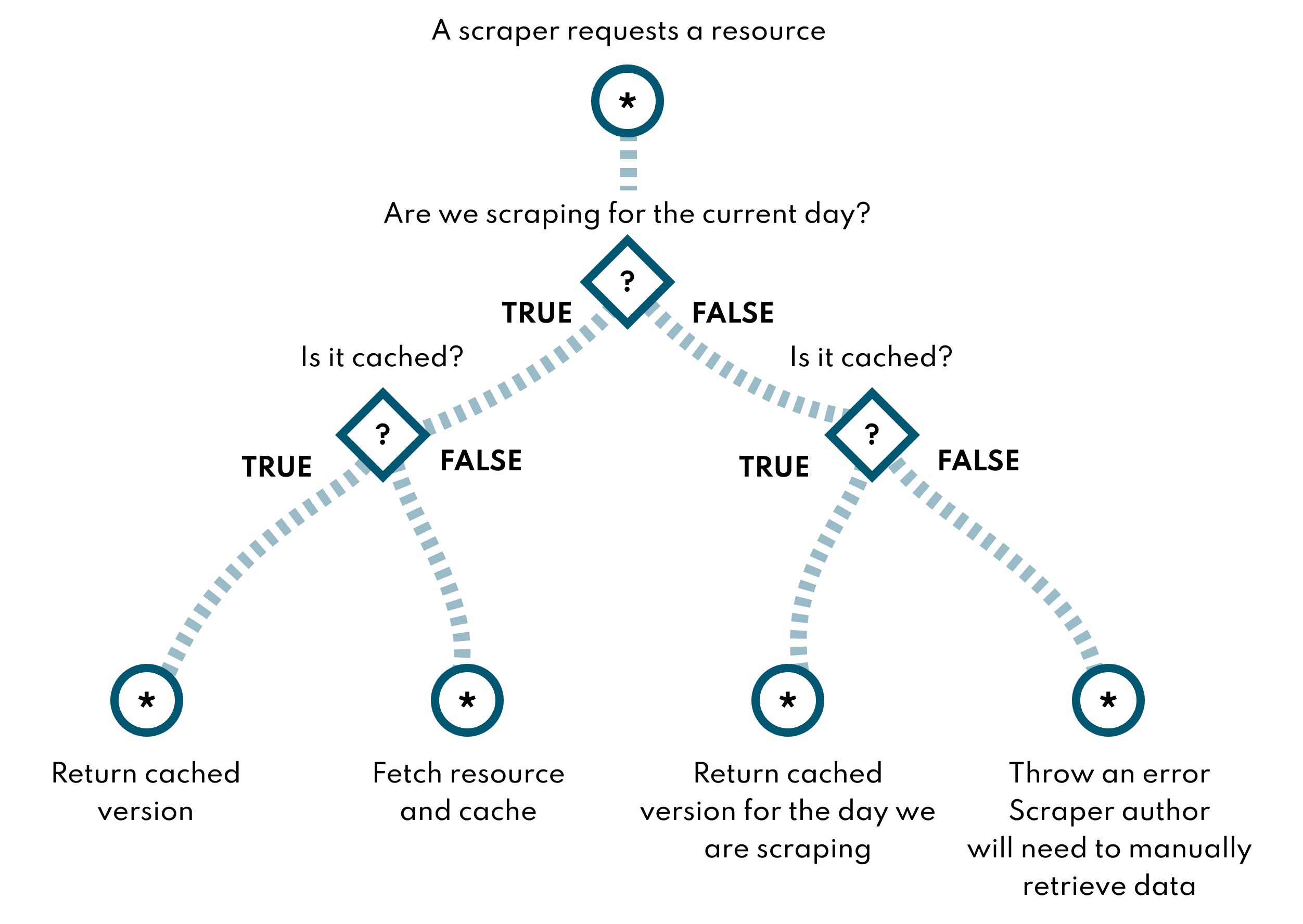

To address these issues, we cached all pages fetched by each scraper. This way, if a scraper failed, it could be fixed and then rerun on the cached page, even if the original page was no longer available.

Caching also kept us from overwhelming government sources with excessive requests, which could have caused a denial of service to an essential resource during a pandemic.

We ran all the scrapers once for each day from January 21 to the current day. For each day, we supplied the cached version of the requested government source to the scraper if available. If we did not have a cached version of the source for that day, we threw an error. This left a blank row for that day and location in the final dataset. The resulting dataset would cover the entire progress of the pandemic and would include the latest scraper fixes.
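The replay described above can be sketched as follows. This is a simplified Python illustration, not the project's implementation: the in-memory `cache` dictionary, the `CacheMiss` exception, the `replay` function, and the example URL are all assumptions made for the example.

```python
from datetime import date, timedelta


class CacheMiss(Exception):
    """Raised when no cached copy of a source exists for the requested day."""


def fetch_cached(cache: dict, url: str, day: date) -> str:
    key = (url, day.isoformat())
    if key not in cache:
        raise CacheMiss(f"no cached copy of {url} for {day.isoformat()}")
    return cache[key]


def replay(cache: dict, url: str, scrape, start: date, end: date) -> dict:
    """Run `scrape` against the cached page for every day from start to end."""
    results = {}
    day = start
    while day <= end:
        try:
            results[day.isoformat()] = scrape(fetch_cached(cache, url, day))
        except CacheMiss:
            # No cached page for this day: leave a blank entry.
            results[day.isoformat()] = None
        day += timedelta(days=1)
    return results


def scrape(page: str) -> int:
    # Toy scraper for the hypothetical page format "cases: N".
    return int(page.split(":")[1])


url = "https://example.org/cases"  # placeholder URL
cache = {
    (url, "2020-03-01"): "cases: 3",
    (url, "2020-03-03"): "cases: 8",
}
series = replay(cache, url, scrape, date(2020, 3, 1), date(2020, 3, 3))
```

Because the scraper only ever sees cached pages, fixing a bug in `scrape` and replaying regenerates the whole history with the fix applied, without sending a single new request to the source.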

Assigning case count to a particular geographic location

All scraped data was assigned to a geographical location. Data attached to a smaller geographic area was aggregated to form a data point for the corresponding higher-level geographic entity (e.g., all county-level data points were aggregated to form the state data point).
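A minimal sketch of this roll-up, assuming each county row carries the name of its parent state. The field names (`state`, `cases`, `deaths`) and the function `aggregate_to_states` are hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def aggregate_to_states(county_rows: list) -> dict:
    """Sum county-level counts into one data point per state."""
    totals = defaultdict(lambda: defaultdict(int))
    for row in county_rows:
        for field in ("cases", "deaths"):
            value = row.get(field)
            # Skip statistics a county did not report instead of counting them as 0.
            if value is not None:
                totals[row["state"]][field] += value
    return {state: dict(fields) for state, fields in totals.items()}


# Hypothetical county-level data points.
county_rows = [
    {"county": "Alpha", "state": "X", "cases": 10, "deaths": 1},
    {"county": "Beta", "state": "X", "cases": 4, "deaths": None},
    {"county": "Gamma", "state": "Y", "cases": 2, "deaths": 0},
]
state_totals = aggregate_to_states(county_rows)
```

One caveat of this approach: a state total is only as complete as the counties that reported, so a missing county silently lowers the aggregate.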

We provided several strategies for scraper authors to link the data produced by their scraper to a geographical location. Scraper authors could specify the city, county, state, or country the scraped data originated from. Alternatively, longitude and latitude information could be given to the scraper runner, which would be used to select the corresponding geographical entity. With a location found, the scraper runner would find a corresponding GeoJSON and population count for each location using data from the ISO-3166 Country and Dependent Territories Lists with UN Regional Codes as well as country-specific regional data.

These two strategies did not scale well as scrapers for more countries were added to the project. Non-anglophone countries proved challenging, as government sources listed regions in the country's native language. Some regions also had multiple names (a short and a long name, for example), and the scraper runner might not have had the information associated with a particular regional name.

We developed an alternative approach based on the ISO-3166-1 and ISO-3166-2 standards. Scraper authors could supply the ISO-3166-1 or ISO-3166-2 code of the location they were scraping. Thanks to the work of a project contributor, Hyperknot, we could retrieve the GeoJSON and population data for all ISO codes, with this data scraped from Wikidata. The country-levels repository is still available online and could prove valuable for any future location-dependent projects.
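To show why codes are easier to work with than free-text region names, here is a small sketch that checks the shape of a code and splits it into its parts. It assumes two-letter (alpha-2) country codes and the usual ISO-3166-2 form of a country code, a hyphen, and one to three alphanumeric characters. `parse_iso_code` is a hypothetical helper, and it validates only the format, not whether the code is actually assigned.

```python
import re

# Alpha-2 country code, optionally followed by "-" and a 1-3 character subdivision.
ISO_CODE = re.compile(r"^([A-Z]{2})(?:-([A-Z0-9]{1,3}))?$")


def parse_iso_code(code: str) -> dict:
    """Split an ISO-3166-1 alpha-2 or ISO-3166-2 code into country and subdivision."""
    match = ISO_CODE.match(code.strip().upper())
    if match is None:
        raise ValueError(f"not an ISO-3166-1 alpha-2 or ISO-3166-2 code: {code!r}")
    country, subdivision = match.groups()
    level = "country" if subdivision is None else "subdivision"
    return {"country": country, "subdivision": subdivision, "level": level}


province = parse_iso_code("CA-BC")
country = parse_iso_code("fr")
```

A code like `CA-BC` is the same regardless of the language a government source is written in, which sidesteps the naming problems described earlier.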

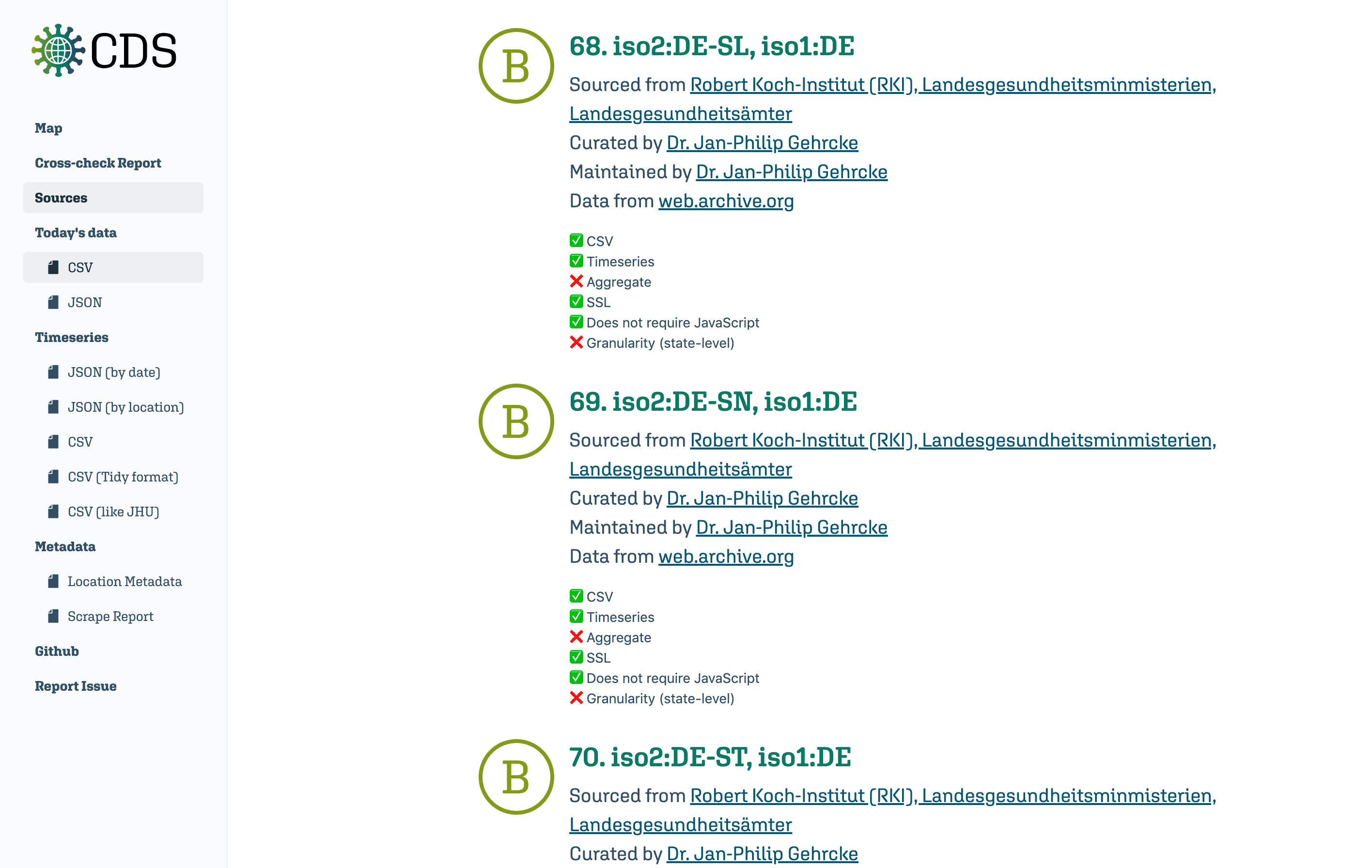

Rating sources

The scraper runner graded all government sources against a list of attributes. We sought to identify the sources least conducive to scraping and to notify the source authors of the issues with their reporting practices.

We rated sources on the following factors:

- Completeness of the data provided — includes daily data points for the number of confirmed, hospitalized, discharged, and recovered cases; fatalities; total tests administered.

- Granularity — data is provided at the most local level possible.

- Machine-readability — data is in JSON or CSV, or another format easily parseable by a computer. The website is SSL encrypted using a well-known SSL certificate.
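The three factors above could be combined into a single score along these lines. This is only a sketch: the equal weighting, the per-format and per-level scores, the SSL penalty, and every name in the code are assumptions invented for the example, not the project's actual rating formula.

```python
from dataclasses import dataclass

# All of the values below are illustrative assumptions.
TRACKED_FIELDS = ("cases", "hospitalized", "discharged", "recovered", "deaths", "tested")
GRANULARITY_SCORE = {"country": 0.25, "state": 0.5, "county": 0.75, "city": 1.0}
READABILITY_SCORE = {"image": 0.0, "pdf": 0.25, "html": 0.5, "csv": 1.0, "json": 1.0}


@dataclass
class Source:
    name: str
    fields: tuple      # statistics the source reports
    level: str         # most local level the source covers
    fmt: str           # format the data is published in
    trusted_ssl: bool  # served with a well-known SSL certificate


def rate(source: Source) -> float:
    """Return a score between 0 and 1; higher means easier to scrape reliably."""
    completeness = sum(f in source.fields for f in TRACKED_FIELDS) / len(TRACKED_FIELDS)
    granularity = GRANULARITY_SCORE[source.level]
    readability = READABILITY_SCORE[source.fmt]
    if not source.trusted_ssl:
        readability *= 0.5  # assumed penalty for an untrusted certificate
    return (completeness + granularity + readability) / 3


good = Source("county JSON feed", TRACKED_FIELDS, "county", "json", True)
poor = Source("state PDF bulletin", ("cases",), "state", "pdf", False)
ranking = sorted([poor, good], key=rate, reverse=True)
```

Whatever the exact weights, reducing each source to one comparable number is what makes it possible to rank sources automatically.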

Deduplicating data

The scraper runner supported multiple sources and scrapers for the same geographical location. This allowed us, for example, to rely on the Johns Hopkins dataset as we grew the number of scrapers available. However, only one data point could be included in the final dataset. We used the rating of each source to select which source to include in the final dataset.

If a source provided an incomplete set of statistics (for example missing hospitalized counts), it could be supplemented with additional sources to retrieve the missing statistics.
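A minimal sketch of this selection and supplementing logic for a single location and day, assuming each candidate carries a numeric rating and a dictionary of statistics in which `None` marks a missing value. The function `merge_sources` and the sample figures are hypothetical.

```python
def merge_sources(candidates: list) -> dict:
    """Merge candidate data points, preferring the highest-rated source per field."""
    ranked = sorted(candidates, key=lambda c: c["rating"], reverse=True)
    merged = {}
    for candidate in ranked:
        for field, value in candidate["data"].items():
            # Lower-rated sources only fill statistics that are still missing.
            if value is not None and field not in merged:
                merged[field] = value
    return merged


# Hypothetical candidates for the same location and day.
candidates = [
    {"rating": 0.6, "data": {"cases": 98, "hospitalized": 12, "deaths": 3}},
    {"rating": 0.9, "data": {"cases": 100, "hospitalized": None}},
]
data_point = merge_sources(candidates)
```

Here the higher-rated source wins for `cases`, while `hospitalized` and `deaths` are filled in from the lower-rated one.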

This was a high-level overview of the project's architecture. If you want to learn more about how we implemented coronadatascraper, feel free to read the project documentation!